AI Usage Governance for SMBs: The Zero-Cost Starting Point for Future-Ready Security

AI is already part of how your employees work, whether leadership formally approved it or not.

That shift did not happen through a structured rollout. It happened through everyday decisions. Someone used an AI tool to draft an email, another uploaded a document to summarize it, and a team experimented with new tools to move faster or reduce workload. In most SMB environments, this is happening quietly and without clear boundaries.

At the same time, 83% of SMBs say AI increases their cybersecurity risk, yet only 51% have implemented policies to address it. That gap is where most of the exposure is forming. The issue is not that employees are using AI. The issue is that they are doing it without consistent guidance.

Where AI Usage Becomes a Real Risk

Most AI-related risks do not stem from malicious behavior. They come from normal workflow decisions made under time pressure.

An employee pastes sensitive information into a public AI tool to get a faster answer. A report is summarized using a platform that stores data externally. A recommendation is generated and used without verification. None of these actions feels like a security event in the moment. They feel efficient and practical.

The risk shows up later, often in ways that are harder to trace. If that information includes PHI, PCI data, financial records, or client data, the exposure is no longer internal. It becomes regulatory, contractual, and in some cases, reputational.

In many organizations, employees do not fully understand where those boundaries exist. They are making decisions in real time without a clear framework to guide them. That is where governance becomes essential.

Why This Is a Governance Issue, Not a Technology Issue

There is a natural tendency to treat AI risk as a technical problem that requires a technical solution. In reality, most SMBs do not need another tool to manage AI usage. They need clarity.

Without a defined approach, every employee is effectively making their own decisions about what is acceptable. That leads to inconsistency, and inconsistency leads to exposure. Governance provides a baseline that removes ambiguity and aligns behavior across the organization.

It answers simple but critical questions:

- Which tools are approved for use?

- What types of data can be used in those tools?

- What types of data should never be entered?

- When is additional review required?

- Who is responsible for maintaining and updating these guidelines?

These decisions do not need to be complex. But they do need to exist and be communicated clearly.
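For teams that want to keep the baseline somewhere more structured than a document, the five questions above can also be captured as a small data record. The following is only an illustrative sketch, not a recommended tool: the tier names, tool names, owner value, and the `AIUsagePolicy` class are all hypothetical, and a real policy would define its own categories.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical data tiers, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3  # e.g. PHI or PCI data


@dataclass(frozen=True)
class AIUsagePolicy:
    owner: str                              # who maintains the guidelines
    approved_tools: dict[str, Sensitivity]  # tool -> highest tier allowed in it
    review_required_from: Sensitivity       # tier at which extra review applies

    def check(self, tool: str, data: Sensitivity) -> str:
        """Answer 'can I use this tool with this kind of data?'"""
        if tool not in self.approved_tools:
            return "blocked: tool not approved"
        if data > self.approved_tools[tool]:
            return "blocked: data too sensitive for this tool"
        if data >= self.review_required_from:
            return "allowed with review"
        return "allowed"


# Assumed example values, for illustration only.
policy = AIUsagePolicy(
    owner="operations-lead",
    approved_tools={
        "public-chatbot": Sensitivity.PUBLIC,
        "company-assistant": Sensitivity.CONFIDENTIAL,
    },
    review_required_from=Sensitivity.CONFIDENTIAL,
)

print(policy.check("public-chatbot", Sensitivity.INTERNAL))
print(policy.check("company-assistant", Sensitivity.CONFIDENTIAL))
```

In this sketch, regulated data sits above every tool's ceiling, so it is never permitted, and any tool missing from the approved list is blocked by default. The same structure could just as easily live in a one-page table; the point is that each question has one explicit answer.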

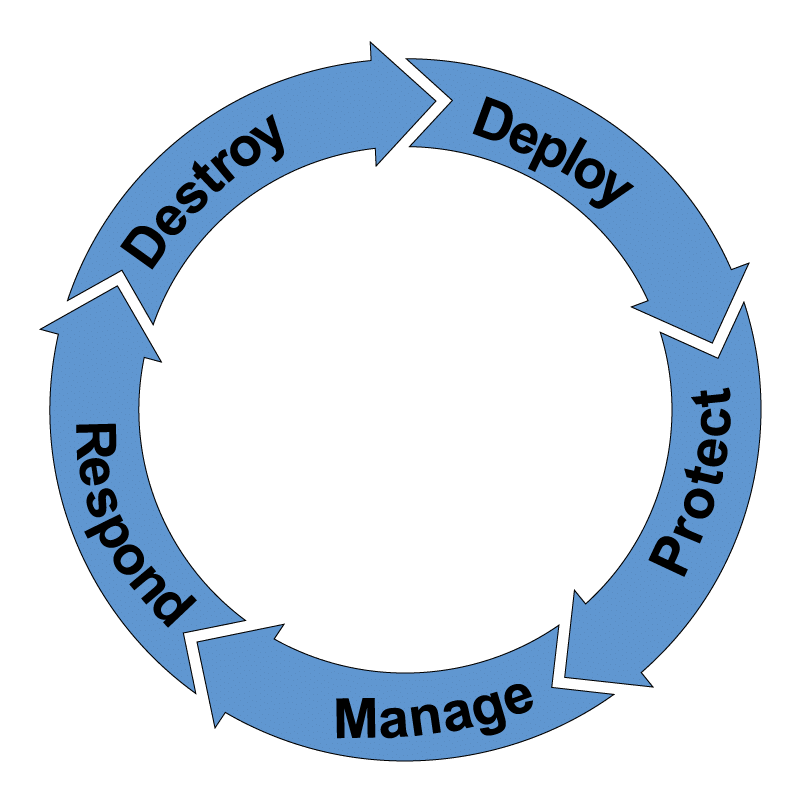

What Practical AI Governance Actually Looks Like

In practice, effective AI governance is less about formal documentation and more about usable guidance.

Policies need to be written so employees can apply them in real situations. If guidance is buried in technical or legal language, it will not influence behavior. Employees need to be able to quickly determine whether a task is appropriate for an AI tool and what precautions they should take.

Alignment with data sensitivity is also critical. Not all information carries the same level of risk, and governance should reflect that reality. Employees should understand the difference between general business information and regulated or confidential data, and how that distinction affects how tools are used.

Validation also plays an important role. AI-generated output should not be treated as final without review. This is not only a quality concern but a risk concern. Decisions based on incomplete or inaccurate information can create downstream issues that are difficult to correct.

Ownership is often overlooked, but it is essential. Someone within the organization needs to be responsible for defining, updating, and reinforcing how AI is used. Without ownership, policies tend to remain static while tools and risks continue to evolve.

Where Organizations Tend to Get Stuck

The most common challenge is not resistance. It is uncertainty about where to begin.

Many SMB leaders recognize that AI introduces new risk, but the issue feels broad and difficult to define. That often leads to hesitation, and in the meantime, employees continue using tools without guidance.

What tends to work best is starting with a simple, practical approach. Define a baseline policy that addresses the most common use cases. Communicate it clearly. Reinforce it through training and real-world examples. Then adjust it as new situations emerge.

This creates momentum without overwhelming the organization and allows governance to evolve alongside usage.

Connecting AI Governance to Real Exposure

Governance does not exist in isolation. The way AI is used internally can directly affect how an organization appears externally.

If data is being processed or exposed through unmanaged tools, it can create visibility in places leadership does not expect. That can influence how the organization is evaluated by regulators, insurers, and clients.

Understanding that external perspective helps determine whether governance is actually working. It also helps prioritize where attention should be focused, especially in environments with limited resources.

Moving Forward with a Practical Approach

Implementing AI governance doesn’t necessarily require a significant investment. It primarily requires alignment and consistency.

Organizations that take the time to define clear guidelines for AI usage, communicate those expectations effectively, and reinforce them through ongoing training are better positioned to manage risks. Over time, this approach can become integrated into the organization’s regular operations rather than being treated as a separate initiative.

The objective is not to eliminate risk entirely, but to ensure that decisions are made consciously and that employees understand their roles in safeguarding the organization. To get started, consider an executive-level Resilience360 conversation. It is a no-commitment way to gain insight into your current AI governance capabilities and needs.